If you have a date in the following format:

10-FEB-13 02:10:09, i.e. dd-MMM-yy HH:mm:ss

then use the following Hive expression to convert it:

from_unixtime(unix_timestamp(YOURDATE, 'dd-MMM-yy HH:mm:ss'))
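In Hive, unix_timestamp parses the string with the given Java date pattern into epoch seconds, and from_unixtime formats those seconds back into a string in the default yyyy-MM-dd HH:mm:ss form. To sanity-check the pattern before running the query, here is a rough local analogue in Python. It assumes the Python codes %d-%b-%y %H:%M:%S correspond to dd-MMM-yy HH:mm:ss in an English locale, and it skips the epoch round trip (which Hive performs in the session time zone). The function name is hypothetical.

```python
from datetime import datetime

# Assumed Python equivalent of the Hive/Java pattern 'dd-MMM-yy HH:mm:ss'.
PY_PATTERN = "%d-%b-%y %H:%M:%S"

def to_hive_default_format(value: str) -> str:
    """Parse 'dd-MMM-yy HH:mm:ss' and render it as 'yyyy-MM-dd HH:mm:ss'."""
    parsed = datetime.strptime(value, PY_PATTERN)  # %b matches 'FEB' case-insensitively
    return parsed.strftime("%Y-%m-%d %H:%M:%S")    # default from_unixtime layout

print(to_hive_default_format("10-FEB-13 02:10:09"))  # 2013-02-10 02:10:09
```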

13 Friday Mar 2015

Posted in BigData


04 Wednesday Mar 2015

Tags

10 big data, Ambari, analyzing tweets, big data analytics, big data architecture, big data certification, big data cloud, big data concept, big data course online, big data download, big data example, big data for beginners, big data for dummies pdf, big data hadoop, big data hive, big data news, big data ppt, big data problems, big data programming, big data tools, big data tutorial, big data university, BigData, bigdata and hadoop training, BigData VM, Cloudera VM, download VM, Falcon, File System, flume, Hadoop, HBase, HDFS, HDP, Hive, Hive-Hcatalog, hortonworks, Hortonworks VM, Hue, IBM BigInsight VM, Java, Jive, Knox, MapReduce, Oozie, Oracle Bigdata Lite, Pig, reading tweets, running hortonworks, twitter

Reading tweets from Twitter requires a Flume setup. I am using Hortonworks 2.2, and Flume is already installed on my sandbox.

If you don't have Flume, install it with:

/> yum install flume

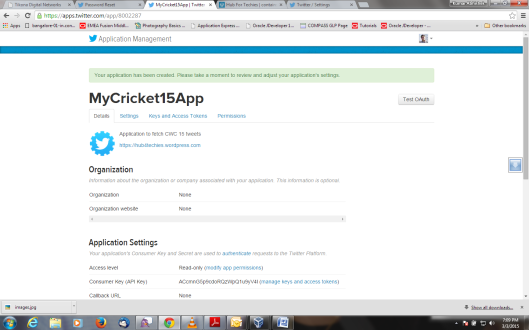

The next step after installing Flume is to create a Twitter developer account and register an application. Follow the steps below.

Assuming you are already logged in, you will be taken to the applications page, which lists any applications you have already created.

———————————————————flume.conf—————————

TwitterAgent.sources = Twitter

TwitterAgent.channels = MemChannel

TwitterAgent.sinks = HDFS

TwitterAgent.sources.Twitter.type = com.cloudera.flume.source.TwitterSource

TwitterAgent.sources.Twitter.channels = MemChannel

TwitterAgent.sources.Twitter.consumerKey = <>

TwitterAgent.sources.Twitter.consumerSecret = <>

TwitterAgent.sources.Twitter.accessToken = <>

TwitterAgent.sources.Twitter.accessTokenSecret = <>

TwitterAgent.sources.Twitter.keywords = #CWC15

TwitterAgent.sinks.HDFS.channel = MemChannel

TwitterAgent.sinks.HDFS.type = hdfs

TwitterAgent.sinks.HDFS.hdfs.path = hdfs://sandbox.hortonworks.com:8020/user/tweets/%Y/%m/%d/%H/

TwitterAgent.sinks.HDFS.hdfs.fileType = DataStream

TwitterAgent.sinks.HDFS.hdfs.writeFormat = Text

TwitterAgent.sinks.HDFS.hdfs.batchSize = 1000

TwitterAgent.sinks.HDFS.hdfs.rollSize = 0

TwitterAgent.sinks.HDFS.hdfs.rollCount = 10000

TwitterAgent.channels.MemChannel.type = memory

TwitterAgent.channels.MemChannel.capacity = 10000

TwitterAgent.channels.MemChannel.transactionCapacity = 100

——————————————————ends——————————

flume.conf is located in <flume_home>/conf/.

The keywords property defines exactly what you want to fetch from Twitter. I have entered #CWC15, i.e. tweets related to the Cricket World Cup 2015.

The HDFS path must be correct for the data to be stored. You can find the NameNode address (the fs.defaultFS property) in core-site.xml. I have created a /tweets folder in the /user directory to store the tweets.
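The %Y/%m/%d/%H escapes in hdfs.path are filled from each event's timestamp, so the tweets land in one folder per hour. Per the Flume user guide, time-based escapes need a timestamp header on the event (or hdfs.useLocalTimeStamp = true). The Python sketch below expands only those four escapes for a given time, to show where a tweet would be written. The helper name is hypothetical, and it is not Flume's own code.

```python
from datetime import datetime

HDFS_PATH = "hdfs://sandbox.hortonworks.com:8020/user/tweets/%Y/%m/%d/%H/"

# The four Flume escapes used in flume.conf, mapped to zero-padded date fields.
ESCAPES = {
    "%Y": lambda t: f"{t.year:04d}",
    "%m": lambda t: f"{t.month:02d}",
    "%d": lambda t: f"{t.day:02d}",
    "%H": lambda t: f"{t.hour:02d}",
}

def expand_bucket_path(template: str, event_time: datetime) -> str:
    """Replace %Y/%m/%d/%H with values taken from event_time (hypothetical helper)."""
    path = template
    for escape, render in ESCAPES.items():
        path = path.replace(escape, render(event_time))
    return path

print(expand_bucket_path(HDFS_PATH, datetime(2015, 3, 4, 14, 5)))
# hdfs://sandbox.hortonworks.com:8020/user/tweets/2015/03/04/14/
```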

-> Add Maven (mvn) to your PATH.

-> Set JAVA_HOME to your JDK installation.

-> Go to the Twitter source folder.

-> Run the "mvn package" command.

This builds the jar for you.

/> cp <jarlocation>/flumejarname.jar <flume_home>/lib/

/> export CLASSPATH=/lib/*

At this point:

-> the source jar is built,

-> Flume is set up and the jar files are on the classpath, and

-> the Twitter access keys are configured.

The above command copies its output to the flume_twitteragent.log file, which helps with debugging if an error occurs.

Now open a browser to see the tweets:

open http://localhost:8000/filebrowser/#/user/tweets

and then browse into the folders.

You should see many tweets listed. Have fun!

Follow me for upcoming posts on analyzing these tweets and generating reports from them.

02 Monday Mar 2015


Follow the link below:

27 Friday Feb 2015


Problem Statement: The Delhi election has been held. Around 250 candidates contested 70 constituencies. The Election Commissioner wants to count the votes and declare the results within a day.

Points:

1. There are many constituencies, and in each one candidates from the political parties are contesting.

2. Every vote is cast on a voting machine, which generates a record of the form CandidateName,PoliticalPartyName,Constituency.

3. All votes are recorded in flat files, which are available on storage media.

Solution:

1. Attach the storage media to the machine that has the big data environment (sandbox) installed.

2. Mount the storage media as secondary storage in the sandbox.

3. Copy the flat files (votes) to HDFS.

4. Write a MapReduce job in JDeveloper and run it on the flat files.

5. Import the reducer output into a Hive table.

6. Clean the data to make it ready for queries.

7. Run HiveQL queries to find the winning candidate in each constituency.
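The data flow in steps 4 and 7 above can be sketched in plain Python. This is not the author's JDeveloper code (that is in the attachment). It assumes each record is exactly CandidateName,PoliticalPartyName,Constituency. The mapper emits a count of 1 per vote, the reduce step sums the counts per candidate after sorting by key (as the shuffle does), and a final pass picks the winner per constituency, which the author does in HiveQL. The sample records are hypothetical, and ties are not handled specially.

```python
from itertools import groupby

def map_vote(line):
    """Mapper: one record per vote -> ((constituency, candidate, party), 1)."""
    candidate, party, constituency = (field.strip() for field in line.split(","))
    return (constituency, candidate, party), 1

def reduce_votes(pairs):
    """Shuffle (sort by key), then reduce: sum the 1s emitted for each key."""
    totals = {}
    for key, group in groupby(sorted(pairs), key=lambda kv: kv[0]):
        totals[key] = sum(count for _, count in group)
    return totals

def winners(totals):
    """Query step: highest vote count per constituency (first wins on a tie)."""
    best = {}
    for (constituency, candidate, party), votes in totals.items():
        if constituency not in best or votes > best[constituency][2]:
            best[constituency] = (candidate, party, votes)
    return best

votes = [  # hypothetical sample records
    "Candidate A,Party X,Constituency 1",
    "Candidate B,Party Y,Constituency 1",
    "Candidate A,Party X,Constituency 1",
    "Candidate C,Party Z,Constituency 2",
]
print(winners(reduce_votes(map(map_vote, votes))))
```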

Code sample attached below (download the file, change its extension to .zip, and then extract it).

It includes the JDeveloper project with the MapReduce code, sample data, and the queries used for data processing.

Detailed steps for the solution:

27 Friday Feb 2015


Follow the "Setting Up MySQL Database for Big Data" post to prepare the database for this example.

Create table test(ename varchar(20));

insert into test values('aaa1');

insert into test values('aaa2');

insert into test values('aaa3');

insert into test values('aaa4');

insert into test values('aaa5');

insert into test values('aaa5');

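/> sqoop import --connect jdbc:mysql://localhost:3306/test --username demo --password welcome1 --table test --hive-import --create-hive-table -m 1

Run this from the sandbox shell, not from the hive> prompt. It creates a table named test in Hive and imports the records from the test table created in MySQL. The -m 1 option runs a single map task, which Sqoop needs here because the test table has no primary key to split the import on.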

hive> show tables;

This shows the list of tables. Check that the test table is in the list.

hive> select * from test;

This lists all records from the test table.

27 Friday Feb 2015


Apache Sqoop(TM) is a tool designed for efficiently transferring bulk data between Apache Hadoop and structured datastores such as relational databases.

Sqoop is a command-line application for transferring data between relational databases and Hadoop. It supports incremental loads of a single table or of a free-form SQL query, as well as saved jobs that can be run repeatedly to import the updates made to a database since the last import.
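Sqoop's append-mode incremental import (--incremental append together with --check-column and --last-value) imports only the rows whose check column is greater than the last value seen. A saved job remembers the new last value for the next run. The Python sketch below illustrates that bookkeeping on in-memory rows. It is a simplified model of the idea, not Sqoop's implementation, and the rows are hypothetical.

```python
def incremental_append(rows, check_column, last_value):
    """Model of append mode: keep rows whose check column exceeds last_value."""
    new_rows = [row for row in rows if row[check_column] > last_value]
    # The next run should start from the largest value imported in this run.
    next_last_value = max((row[check_column] for row in new_rows), default=last_value)
    return new_rows, next_last_value

table = [{"id": i, "ename": f"aaa{i}"} for i in range(1, 6)]  # hypothetical source rows
batch, last = incremental_append(table, "id", 3)
print([row["id"] for row in batch], last)  # [4, 5] 5
```

Running it again with the returned value imports nothing until new rows with a larger id appear, which is what makes repeated saved-job runs cheap.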

27 Friday Feb 2015


Step 1: Let's create a directory in HDFS, upload a file, and list it.

Let’s look at the syntax first:

hadoop fs -mkdir:

Usage:

hadoop fs -mkdir <paths>

Example:

hadoop fs -mkdir /user/hadoop/dir1 /user/hadoop/dir2

hadoop fs -mkdir hdfs://nn1.example.com/user/hadoop/dir

hadoop fs -ls:

Usage:

hadoop fs -ls <args>

Example:

hadoop fs -ls /user/hadoop/dir1 /user/hadoop/dir2

hadoop fs -ls /user/hadoop/dir1/filename.txt

hadoop fs -ls hdfs://<hostname>:9000/user/hadoop/dir1/

Let's run the following commands. You can SSH into the sandbox using a tool such as PuTTY; putty.exe can be downloaded from the internet.

Let's create an empty file locally with touch:

$ touch filename.txt

Step 2: Now let's see how to find the space used by an HDFS directory.

hadoop fs -du:

Usage:

hadoop fs -du URI

Example:

hadoop fs -du /user/hadoop/ /user/hadoop/dir1/Sample.txt

Step 4:

Now let's see how to upload files to and download files from the Hadoop Distributed File System (HDFS).

Upload (we have already tried this earlier):

hadoop fs -put:

Usage:

hadoop fs -put <localsrc> … <HDFS_dest_Path>

Example:

hadoop fs -put /home/ec2-user/Samplefile.txt ./ambari.repo /user/hadoop/dir3/

Download:

hadoop fs -get:

Usage:

hadoop fs -get <hdfs_src> <localdst>

Example:

hadoop fs -get /user/hadoop/dir3/Samplefile.txt /home/

Step 5: Let's quickly look at two advanced features.

hadoop fs -getmerge

Usage:

hadoop fs -getmerge <src> <localdst> [addnl]

Example:

hadoop fs -getmerge /user/hadoop/dir1/ ./Samplefile2.txt

Option:

addnl: can be set to add a newline character at the end of each file.
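To make the getmerge semantics concrete, here is a local-filesystem analogue in Python. It concatenates every file in a directory into one local file, optionally adding a newline after each file as addnl does. It works on local directories only, not HDFS, and it assumes the files are merged in name order. The function and file names are illustrative.

```python
import os
import tempfile

def getmerge(src_dir, local_dst, addnl=False):
    """Concatenate the files in src_dir (sorted by name) into local_dst."""
    names = sorted(
        name for name in os.listdir(src_dir)
        if os.path.isfile(os.path.join(src_dir, name))
    )
    with open(local_dst, "wb") as out:
        for name in names:
            with open(os.path.join(src_dir, name), "rb") as part:
                out.write(part.read())
            if addnl:
                out.write(b"\n")  # the addnl option: a newline after each file

with tempfile.TemporaryDirectory() as tmp:
    src = os.path.join(tmp, "dir1")
    os.mkdir(src)
    for name, text in [("txt1", b"first"), ("txt2", b"second")]:
        with open(os.path.join(src, name), "wb") as f:
            f.write(text)
    merged = os.path.join(tmp, "Samplefile2.txt")
    getmerge(src, merged, addnl=True)
    with open(merged, "rb") as f:
        print(f.read())  # b'first\nsecond\n'
```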

hadoop distcp:

Usage:

hadoop distcp <srcurl> <desturl>

Example:

hadoop distcp hdfs://<NameNode1>:8020/user/hadoop/dir1/ hdfs://<NameNode2>:8020/user/hadoop/dir2/

You could use the following steps to try getmerge and distcp.

Let’s upload two files for this exercise first:

# touch txt1 txt2

# hadoop fs -put txt1 txt2 /user/hadoop/dir2/

# hadoop fs -ls /user/hadoop/dir2/

Step 6: Getting help

You can use the help command to get a list of the commands supported by the Hadoop Distributed File System (HDFS) shell.

Example:

hadoop fs -help

I hope this short tutorial was useful for learning the basics of HDFS file management.

The Hadoop shell is a family of commands that you can run from your operating system’s command line. The shell has two sets of commands: one for file manipulation (similar in purpose and syntax to Linux commands that many of us know and love) and one for Hadoop administration. The following table summarizes the first set of commands for you.

| Command | What It Does | Usage | Examples |
| --- | --- | --- | --- |
| cat | Copies source paths to stdout. | hdfs dfs -cat URI [URI …] | hdfs dfs -cat hdfs://<path>/file1; hdfs dfs -cat file:///file2 /user/hadoop/file3 |
| chgrp | Changes the group association of files. With -R, makes the change recursively through the directory structure. The user must be the file owner or the superuser. | hdfs dfs -chgrp [-R] GROUP URI [URI …] | |
| chmod | Changes the permissions of files. With -R, makes the change recursively through the directory structure. The user must be the file owner or the superuser. | hdfs dfs -chmod [-R] <MODE[,MODE]… \| OCTALMODE> URI [URI …] | hdfs dfs -chmod 777 test/data1.txt |
| chown | Changes the owner of files. With -R, makes the change recursively through the directory structure. The user must be the superuser. | hdfs dfs -chown [-R] [OWNER][:[GROUP]] URI [URI …] | hdfs dfs -chown -R hduser2 /opt/hadoop/logs |
| copyFromLocal | Works like the put command, except that the source is restricted to a local file reference. | hdfs dfs -copyFromLocal <localsrc> URI | hdfs dfs -copyFromLocal input/docs/data2.txt hdfs://localhost/user/rosemary/data2.txt |
| copyToLocal | Works like the get command, except that the destination is restricted to a local file reference. | hdfs dfs -copyToLocal [-ignorecrc] [-crc] URI <localdst> | hdfs dfs -copyToLocal data2.txt data2.copy.txt |
| count | Counts the number of directories, files, and bytes under the paths that match the specified file pattern. | hdfs dfs -count [-q] <paths> | hdfs dfs -count hdfs://nn1.example.com/file1 hdfs://nn2.example.com/file2 |
| cp | Copies one or more files from a specified source to a specified destination. If you specify multiple sources, the destination must be a directory. | hdfs dfs -cp URI [URI …] <dest> | hdfs dfs -cp /user/hadoop/file1 /user/hadoop/file2 /user/hadoop/dir |
| du | Displays the size of the specified file, or the sizes of the files and directories contained in the specified directory. With -s, displays an aggregate summary instead of individual sizes; with -h, formats the sizes in a "human-readable" way. | hdfs dfs -du [-s] [-h] URI [URI …] | hdfs dfs -du /user/hadoop/dir1 /user/hadoop/file1 |
| dus | Displays a summary of file sizes; equivalent to hdfs dfs -du -s. | hdfs dfs -dus <args> | |
| expunge | Empties the trash. When trash is enabled, a deleted file isn't removed from HDFS immediately but is moved to the user's .Trash directory. As long as the file remains there, you can restore it if you change your mind, though only the latest copy of the deleted file can be restored. | hdfs dfs -expunge | |
| get | Copies files to the local file system. Files that fail a cyclic redundancy check (CRC) can still be copied if you specify the -ignorecrc option. The CRC is a common technique for detecting data transmission errors. CRC checksum files have the .crc extension and are used to verify the data integrity of another file; they are copied if you specify the -crc option. | hdfs dfs -get [-ignorecrc] [-crc] <src> <localdst> | hdfs dfs -get /user/hadoop/file3 localfile |
| getmerge | Concatenates the files in src and writes the result to the specified local destination file. To add a newline character at the end of each file, specify the addnl option. | hdfs dfs -getmerge <src> <localdst> [addnl] | hdfs dfs -getmerge /user/hadoop/mydir/ ~/result_file addnl |
| ls | Returns statistics for the specified files or directories. | hdfs dfs -ls <args> | hdfs dfs -ls /user/hadoop/file1 |
| lsr | Recursive version of ls; similar to the Unix command ls -R. | hdfs dfs -lsr <args> | hdfs dfs -lsr /user/hadoop |
| mkdir | Creates directories on one or more specified paths. In Hadoop 1 it behaves like the Unix mkdir -p command, creating any missing parent directories; in Hadoop 2 and later you need the -p option for that behavior. | hdfs dfs -mkdir [-p] <paths> | hdfs dfs -mkdir -p /user/hadoop/dir5/temp |
| moveFromLocal | Works like the put command, except that the source is deleted after it is copied. | hdfs dfs -moveFromLocal <localsrc> <dest> | hdfs dfs -moveFromLocal localfile1 localfile2 /user/hadoop/hadoopdir |
| mv | Moves one or more files from a specified source to a specified destination. If you specify multiple sources, the destination must be a directory. Moving files across file systems isn't permitted. | hdfs dfs -mv URI [URI …] <dest> | hdfs dfs -mv /user/hadoop/file1 /user/hadoop/file2 |
| put | Copies files from the local file system to the destination file system. It can also read input from stdin (when the source is -) and write it to the destination file system. | hdfs dfs -put <localsrc> … <dest> | hdfs dfs -put localfile1 localfile2 /user/hadoop/hadoopdir; hdfs dfs -put - /user/hadoop/hadoopfile (reads input from stdin) |
| rm | Deletes one or more specified files. It does not delete directories; use rmr for that. To bypass the trash (if it's enabled) and delete the specified files immediately, specify the -skipTrash option. | hdfs dfs -rm [-skipTrash] URI [URI …] | hdfs dfs -rm hdfs://nn.example.com/file9 |
| rmr | Recursive version of rm. | hdfs dfs -rmr [-skipTrash] URI [URI …] | hdfs dfs -rmr /user/hadoop/dir |
| setrep | Changes the replication factor for a specified file or directory. With -R, makes the change recursively through the directory structure. | hdfs dfs -setrep [-R] <rep> <path> | hdfs dfs -setrep -R 3 /user/hadoop/dir1 |
| stat | Displays information about the specified path. | hdfs dfs -stat URI [URI …] | hdfs dfs -stat /user/hadoop/dir1 |
| tail | Displays the last kilobyte of a specified file to stdout. The syntax supports the Unix -f option, which lets you monitor the file: as another process appends lines to it, tail updates the display. | hdfs dfs -tail [-f] URI | hdfs dfs -tail /user/hadoop/file1 |
| test | Tests the specified path and returns exit status 0 if the test is true: -e checks whether the file or directory exists, -z whether the file is zero length, and -d whether the path is a directory. | hdfs dfs -test -[ezd] URI | hdfs dfs -test -e /user/hadoop/dir1 |
| text | Outputs a specified source file in text format. Valid input file formats are zip and TextRecordInputStream. | hdfs dfs -text <src> | hdfs dfs -text /user/hadoop/file8.zip |
| touchz | Creates a new, empty file of size 0 at the specified path. | hdfs dfs -touchz <path> | hdfs dfs -touchz /user/hadoop/file12 |

Any Hadoop administrator worth his salt must master a comprehensive set of commands for cluster administration. The following table summarizes the most important commands. Know them, and you will advance a long way along the path to Hadoop wisdom.

| Command | What It Does | Syntax | Example |
| --- | --- | --- | --- |
| balancer | Runs the cluster-balancing utility. The specified threshold value, which represents a percentage of disk capacity, overrides the default threshold value (10 percent). To stop the rebalancing process, press Ctrl+C. | hadoop balancer [-threshold <threshold>] | hadoop balancer -threshold 20 |
| daemonlog | Gets or sets the log level for each daemon (also known as a service). Connects to http://host:port/logLevel?log=name and prints or sets the log level of the daemon running at host:port. Hadoop daemons generate log files that help you determine what's happening on the system, and you can use the daemonlog command to temporarily change the log level of a Hadoop component while you're debugging. The change takes effect immediately but is not permanent; it is reset when the daemon restarts. | hadoop daemonlog -getlevel <host:port> <name>; hadoop daemonlog -setlevel <host:port> <name> <level> | hadoop daemonlog -getlevel 10.250.1.15:50030 org.apache.hadoop.mapred.JobTracker; hadoop daemonlog -setlevel 10.250.1.15:50030 org.apache.hadoop.mapred.JobTracker DEBUG |
| datanode | Runs the HDFS DataNode service, which coordinates storage on each slave node. If you specify -rollback, the DataNode is rolled back to the previous version. Stop the DataNode and distribute the previous Hadoop version before using this option. | hadoop datanode [-rollback] | hadoop datanode -rollback |
| dfsadmin | Runs a number of Hadoop Distributed File System (HDFS) administrative operations. Use the -help option to see a list of all supported options. The generic options are a common set of options supported by several commands. | hadoop dfsadmin [GENERIC_OPTIONS] [-report] [-safemode enter \| leave \| get \| wait] [-refreshNodes] [-finalizeUpgrade] [-upgradeProgress status \| details \| force] [-metasave filename] [-setQuota <quota> <dirname>…<dirname>] [-clrQuota <dirname>…<dirname>] [-restoreFailedStorage true\|false\|check] [-help [cmd]] | |
| mradmin | Runs a number of MapReduce administrative operations. Use the -help option to see a list of all supported options. Again, the generic options are a common set of options supported by several commands. -refreshServiceAcl reloads the service-level authorization policy file (JobTracker reloads the authorization policy file); -refreshQueues reloads the queue access control lists (ACLs) and state (JobTracker reloads the mapred-queues.xml file); -refreshNodes refreshes the hosts information at the JobTracker; -refreshUserToGroupsMappings refreshes user-to-groups mappings; -refreshSuperUserGroupsConfiguration refreshes superuser proxy groups mappings; and -help [cmd] displays help for the given command or for all commands if none is specified. | hadoop mradmin [GENERIC_OPTIONS] [-refreshServiceAcl] [-refreshQueues] [-refreshNodes] [-refreshUserToGroupsMappings] [-refreshSuperUserGroupsConfiguration] [-help [cmd]] | hadoop mradmin -help -refreshNodes |
| jobtracker | Runs the MapReduce JobTracker node, which coordinates the data processing system for Hadoop. If you specify -dumpConfiguration, the configuration used by the JobTracker and the queue configuration are written to standard output in JSON format. | hadoop jobtracker [-dumpConfiguration] | hadoop jobtracker -dumpConfiguration |
| namenode | Runs the NameNode, which coordinates the storage for the whole Hadoop cluster. With -format, the NameNode is started, formatted, and then stopped; with -upgrade, the NameNode starts with the upgrade option after a new Hadoop version is distributed; with -rollback, the NameNode is rolled back to the previous version (stop the cluster and distribute the previous Hadoop version before using this option); with -finalize, the previous state of the file system is removed, the most recent upgrade becomes permanent, rollback is no longer available, and the NameNode is stopped; finally, with -importCheckpoint, an image is loaded from the checkpoint directory (as specified by the fs.checkpoint.dir property) and saved into the current directory. | hadoop namenode [-format] \| [-upgrade] \| [-rollback] \| [-finalize] \| [-importCheckpoint] | hadoop namenode -finalize |
| secondarynamenode | Runs the secondary NameNode. If you specify -checkpoint, a checkpoint on the secondary NameNode is performed if the size of the EditLog (a transaction log that records every change to the file system metadata) is greater than or equal to fs.checkpoint.size; add force and a checkpoint is performed regardless of the EditLog size; specify -geteditsize and the EditLog size is printed. | hadoop secondarynamenode [-checkpoint [force]] \| [-geteditsize] | hadoop secondarynamenode -geteditsize |
| tasktracker | Runs a MapReduce TaskTracker node. | hadoop tasktracker | hadoop tasktracker |
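The balancer's threshold is measured in percentage points of utilization. Per the Hadoop documentation, the cluster counts as balanced when every DataNode's utilization (used space divided by capacity) is within the threshold of the whole cluster's utilization. The small Python sketch below shows that check with hypothetical node sizes. The real balancer then plans and moves blocks, which this does not model.

```python
def over_threshold(nodes, threshold=10.0):
    """Return DataNodes whose utilization differs from the cluster's by more than threshold points.

    nodes maps a node name to (used_bytes, capacity_bytes).
    """
    total_used = sum(used for used, _ in nodes.values())
    total_capacity = sum(capacity for _, capacity in nodes.values())
    cluster_pct = 100.0 * total_used / total_capacity
    return sorted(
        name for name, (used, capacity) in nodes.items()
        if abs(100.0 * used / capacity - cluster_pct) > threshold
    )

cluster = {"dn1": (700, 1000), "dn2": (450, 1000), "dn3": (650, 1000)}  # hypothetical
print(over_threshold(cluster))        # ['dn2']  (cluster at 60%, dn2 at 45%)
print(over_threshold(cluster, 20.0))  # []
```

Raising the threshold, as in hadoop balancer -threshold 20, tolerates more skew and so leaves fewer nodes needing block moves.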

The dfsadmin tools are a specific set of tools designed to help you root out information about your Hadoop Distributed File system (HDFS). As an added bonus, you can use them to perform some administration operations on HDFS as well.

| Option | What It Does |
| --- | --- |
| -report | Reports basic file system information and statistics. |
| -safemode enter \| leave \| get \| wait | Manages safe mode, a NameNode state in which changes to the name space are not accepted and blocks can be neither replicated nor deleted. The NameNode is in safe mode during start-up so that it doesn't prematurely start replicating blocks even though there are already enough replicas in the cluster. |
| -refreshNodes | Forces the NameNode to reread its configuration, including the dfs.hosts.exclude file. The NameNode decommissions nodes after their blocks have been replicated onto machines that will remain active. |
| -finalizeUpgrade | Completes the HDFS upgrade process. DataNodes and the NameNode delete working directories from the previous version. |
| -upgradeProgress status \| details \| force | Requests the standard or detailed current status of the distributed upgrade, or forces the upgrade to proceed. |
| -metasave filename | Saves the NameNode's primary data structures to filename in a directory that's specified by the hadoop.log.dir property. File filename, which is overwritten if it already exists, contains one line for each of these items: a) DataNodes that are exchanging heartbeats with the NameNode; b) blocks that are waiting to be replicated; c) blocks that are being replicated; and d) blocks that are waiting to be deleted. |
| -setQuota <quota> <dirname>…<dirname> | Sets an upper limit on the number of names in the directory tree. You can set this limit (a long integer) for one or more directories simultaneously. |
| -clrQuota <dirname>…<dirname> | Clears the upper limit on the number of names in the directory tree. You can clear this limit for one or more directories simultaneously. |
| -restoreFailedStorage true \| false \| check | Turns on or off the automatic attempts to restore failed storage replicas. If a failed storage location becomes available again, the system attempts to restore edits and the fsimage during a checkpoint. The check option returns the current setting. |
| -help [cmd] | Displays help information for the given command or for all commands if none is specified. |
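The -setQuota option limits names, not bytes. The HDFS quota documentation counts every file and directory name in the tree, including the directory itself, which is why a quota of 1 keeps a directory empty. The Python sketch below counts names in a hypothetical nested-dict directory tree to show how such a quota is evaluated. It does not talk to HDFS, and the layout is made up for illustration.

```python
def name_count(tree):
    """Count the names charged to a directory's name quota.

    A directory is a dict (child name -> subtree); a file is None.
    The directory itself counts as one name, as in HDFS.
    """
    if tree is None:
        return 1
    return 1 + sum(name_count(child) for child in tree.values())

def within_name_quota(tree, quota):
    return name_count(tree) <= quota

dir1 = {"file1": None, "file2": None, "sub": {"file3": None}}  # hypothetical layout
print(name_count(dir1))            # 5
print(within_name_quota({}, 1))    # True: a quota of 1 keeps a directory empty
print(within_name_quota(dir1, 4))  # False
```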

27 Friday Feb 2015


Refer to this URL to load the data into the sandbox.

-- HCatLoader needs the HCatalog jars; for example, start Pig with: pig -useHCatalog

a = LOAD 'default.nyse_stocks' USING org.apache.hive.hcatalog.pig.HCatLoader();

b = GROUP a BY stock_symbol;

c = FOREACH b GENERATE FLATTEN(a.(stock_symbol)), MAX(a.stock_price_high), MIN(a.stock_price_low);

d = DISTINCT c;

dump d;

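Description:

a holds the data from the nyse_stocks table in Hive.

b holds the records grouped by stock_symbol.

c computes the maximum stock_price_high and minimum stock_price_low for each stock_symbol. Because FLATTEN emits one row per record in the group, the same result is repeated for every record of that symbol.

d removes those duplicates, leaving one record per stock_symbol.

Finally, dump prints d.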
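For readers who want to check the logic without the sandbox, here is the same aggregation in Python over a few hypothetical rows that reuse the column names from the script. Keying the result by symbol yields one record per stock_symbol directly. In Pig, generating the group key instead of the flattened bag (FOREACH b GENERATE group, MAX(...), MIN(...)) should have the same effect and make the DISTINCT step unnecessary.

```python
def max_high_min_low(rows):
    """Per stock_symbol: the maximum stock_price_high and minimum stock_price_low."""
    stats = {}
    for row in rows:
        symbol = row["stock_symbol"]
        high, low = row["stock_price_high"], row["stock_price_low"]
        if symbol in stats:
            best_high, best_low = stats[symbol]
            stats[symbol] = (max(best_high, high), min(best_low, low))
        else:
            stats[symbol] = (high, low)
    return stats

rows = [  # hypothetical rows shaped like the nyse_stocks columns used above
    {"stock_symbol": "IBM", "stock_price_high": 10.0, "stock_price_low": 8.0},
    {"stock_symbol": "IBM", "stock_price_high": 12.0, "stock_price_low": 9.0},
    {"stock_symbol": "XYZ", "stock_price_high": 5.0, "stock_price_low": 4.0},
]
print(max_high_min_low(rows))  # {'IBM': (12.0, 8.0), 'XYZ': (5.0, 4.0)}
```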

27 Friday Feb 2015


Refer to this URL to load the data into the sandbox.

a = LOAD 'default.nyse_stocks' USING org.apache.hive.hcatalog.pig.HCatLoader();

b = FILTER a BY stock_symbol == 'IBM';

c = GROUP b ALL;

d = FOREACH c GENERATE AVG(b.stock_volume);

DUMP d;

Description:

a holds the data loaded from the Hive table.

b holds the records filtered by the stock_symbol == 'IBM' condition.

c puts all records of b into a single group (GROUP ... ALL), so an aggregate can be computed over all of them, much like a SQL aggregate query without GROUP BY.

d holds the average stock_volume, calculated by iterating over that single group.
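The same computation in Python, as a quick sanity check on a few hypothetical rows, follows the three steps of the script: filter to IBM, treat all remaining rows as one group, and average stock_volume. Returning None when nothing matches is this sketch's own choice.

```python
def average_volume(rows, symbol="IBM"):
    """Mirror the script: FILTER by symbol, GROUP ... ALL, then AVG(stock_volume)."""
    volumes = [row["stock_volume"] for row in rows if row["stock_symbol"] == symbol]  # FILTER
    if not volumes:
        return None  # nothing matched, so there is nothing to average
    return sum(volumes) / len(volumes)  # AVG over the single group

rows = [  # hypothetical rows
    {"stock_symbol": "IBM", "stock_volume": 100},
    {"stock_symbol": "IBM", "stock_volume": 300},
    {"stock_symbol": "XYZ", "stock_volume": 50},
]
print(average_volume(rows))  # 200.0
```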

27 Friday Feb 2015


Open the Hortonworks VM.

Type "mysql" and press Enter.

mysql> create database demo;

mysql> create user 'demo'@'localhost' identified by 'welcome1';

mysql> grant all privileges on *.* to 'demo'@'localhost';

The commands above create a database named demo, create a user demo with the password welcome1, and grant that user all privileges.

We will use this database for the Sqoop exercises later.